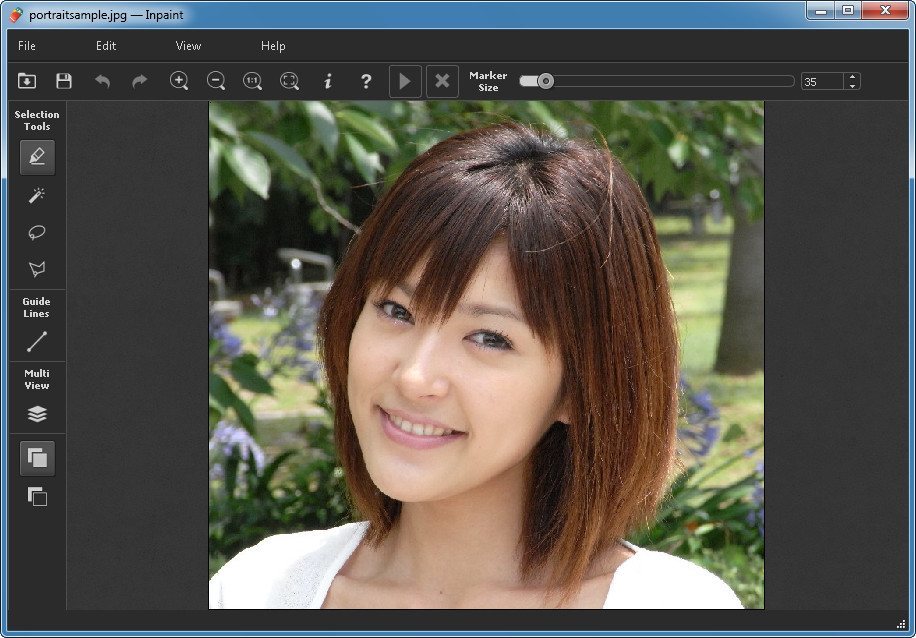

The image size needs to be adjusted to be the same as the original image. This is like generating multiple images but only in a particular area. You can reuse the original prompt for fixing defects. This is the area you want Stable Diffusion to regenerate the image. Use the paintbrush tool to create a mask. We will inpaint both the right arm and the face at the same time. Upload the image to the inpainting canvas. In AUTOMATIC1111 GUI, Select the img2img tab and select the Inpaint sub-tab. Select sd-v1-5-inpainting.ckpt to enable the model. In AUTOMATIC1111, press the refresh icon next to the checkpoint selection dropbox at the top left. To install the v1.5 inpainting model, download the model checkpoint file and put it in the folder stable-diffusion-webui/models/Stable-diffusion But usually, it’s OK to use the same model you generated the image with for inpainting. It’s a fine image but I would like to fix the following issuesĭo you know there is a Stable Diffusion model trained for inpainting? You can use it if you want to get the best result. , (long hair:0.5), headLeaf, wearing stola, vast roman palace, large window, medieval renaissance palace, ((large room)), 4k, arstation, intricate, elegant, highly detailed (Detailed settings can be found here.) Original image I will use an original image from the Lonely Palace prompt:

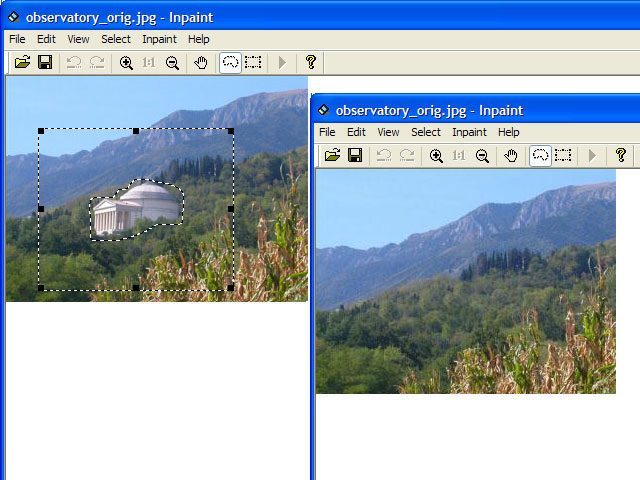

In this section, I will show you step-by-step how to use inpainting to fix small defects. See my quick start guide for setting up in Google’s cloud server. I created a corresponding strokes with Paint tool.We will use Stable Diffusion AI and AUTOMATIC1111 GUI. My image is degraded with some black strokes (I added manually). We need to create a mask of same size as that of input image, where non-zero pixels corresponds to the area which is to be inpainted. This algorithm is enabled by using the flag, cv.INPAINT_NS. Once they are obtained, color is filled to reduce minimum variance in that area. For this, some methods from fluid dynamics are used. It continues isophotes (lines joining points with same intensity, just like contours joins points with same elevation) while matching gradient vectors at the boundary of the inpainting region. It first travels along the edges from known regions to unknown regions (because edges are meant to be continuous). This algorithm is based on fluid dynamics and utilizes partial differential equations. Inpainting"** by Bertalmio, Marcelo, Andrea L. Second algorithm is based on the paper **"Navier-Stokes, Fluid Dynamics, and Image and Video This algorithm is enabled by using the flag, cv.INPAINT_TELEA. FMM ensures those pixels near the known pixels are inpainted first, so that it just works like a manual heuristic operation. Once a pixel is inpainted, it moves to next nearest pixel using Fast Marching Method. More weightage is given to those pixels lying near to the point, near to the normal of the boundary and those lying on the boundary contours. Selection of the weights is an important matter. This pixel is replaced by normalized weighted sum of all the known pixels in the neighbourhood. It takes a small neighbourhood around the pixel on the neighbourhood to be inpainted. Algorithm starts from the boundary of this region and goes inside the region gradually filling everything in the boundary first. 
Consider a region in the image to be inpainted. Both can be accessed by the same function, cv.inpaint()įirst algorithm is based on the paper **"An Image Inpainting Technique Based on the Fast Marching

Several algorithms were designed for this purpose and OpenCV provides two of them.
